The only way to understand personal differences is to talk about them.

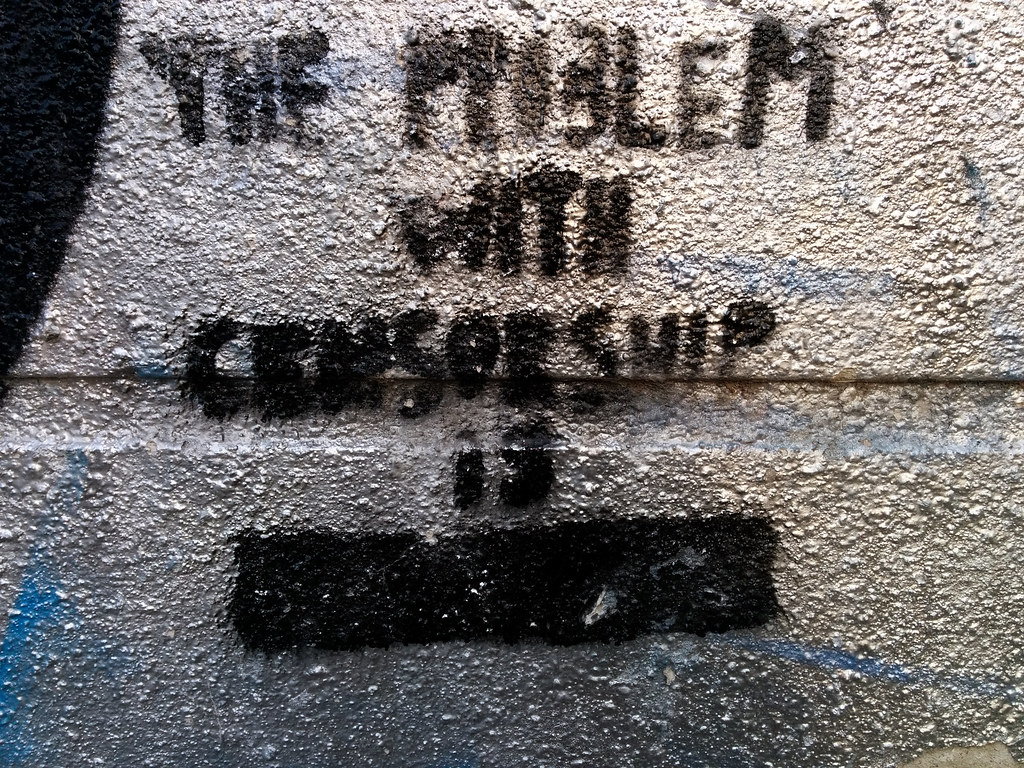

Everybody condemns censorship. The Founding Fathers prohibited the government from doing it. But, censorship of social media is a different story. It’s not covered by the First Amendment. The Constitution applies only to government.

Comprehensive Generic Censorship

Censoring social media is an issue for private companies. They’re caught in a dilemma: if they censor an inflammatory post, that’s intrusive; if they don’t censor such a post, they’re condoning the content. Companies are just there for the profit. They didn’t sign up for being socially responsible.

Private companies can do whatever they want (so long as it is legal); they just have to decide if their customers will accept their decision. What one customer might think is offensive or harmful to the community, another may not. But private companies also censor posts that they believe are detrimental to their business. Should they be allowed to restrict fair competition? Should Facebook be allowed to censor any mention of LinkedIn, WordPress, Nextdoor, or Medium? They can, and do. It’s not illegal.

But social media companies need to take some action that addresses the real problem. Their customers demand it (just so long as it isn’t them being censored). If the companies don’t take any action, eventually the government will step in to enact laws and promulgate regulations. Then the companies will have to deal with the offensive content, AND the laws and regulations, AND the continual government monitoring of what they’re doing.

So most social media companies take a strict, authoritarian approach. They unilaterally decide what to censor, create rules to apply their decisions to all users, and employ moderators to enforce the rules. One size fits all. Sometimes they explain their decisions, but usually they just post a notice of their decree. They can do that; it’s their company.

The problem is that one size DOES NOT fit all. People have different perspectives and expectations. What’s offensive to one person may be a worthy topic of discussion for another. Some people don’t want to see posts about religion or politics or money or gun ownership, and others do. In fact, those are the posts they want to read. That’s one way to learn things: read what other people write about.

And that is where censorship on social media sites goes so horribly wrong. If we don’t talk about the problems and differences we have, nothing will ever get better. Have we learned nothing from Dr. Phil? In the 1950s, we were taught not to discuss politics and religion. Look where that got us. Now, we can’t talk about either without a fight breaking out.

The current approach to censorship of social media guarantees that we’ll be doomed to live in a mind-controlled society where the powerful tell us what to think.

Personalized Targeted Censorship

So here’s the idea. Instead of applying comprehensive censorship rules on all users regardless of their sensitivities and information needs, personalize what each user gets to see. Allow individuals to censor the content they don’t want to see without impacting the entire community. It’s like turning off a TV show you don’t want to watch without removing the show from the entire station.

This isn’t a difficult concept. Social media platforms already target content to customers through the use of user-specified interests, hashtags, and the like. This personalized-censorship approach would involve using the same strategy for censorship. Customers would be involved in deciding what gets censored from their own feed. The process would be automated, like topics on social media are now. The censorship would be transparent to both posters and readers. There would be no backroom decisions by anonymous moderators.

Here’s how the five components of a personalized-censorship system might work:

- Illegality Detection

- Post Characterization

- Post Filtering

- Bully Deterrence

- “Karen” Deterrence

Illegality Detection

SOCIAL MEDIA PLATFORMS would be solely responsible for filtering out illegalities. It’s a task that is not without its challenges. After all, interpreting all the finer shades of illegality is why we have all those highly paid lawyers and judges.

Social media companies have immunity from lawsuits under Section 230 of the Communications Decency Act of 1996. This Act was written to address pornography. Section 230 was meant to let information service providers say “it’s not my fault” when publishing content originated by others. All they have to do is provide an even marginally plausible rationale in good faith. Usually the rationale is freedom of speech, despite many counter-arguments. That’s why some people just want to repeal the Section and eliminate the loophole, which would also have its consequences.

Section 230 gives social-media companies a sense of security they, perhaps, don’t really have. Whether they censor some content, or fail to censor it, has no legal consequence for them. But that infuriates a portion of their customers: either the ones whose posts are censored or the ones who believe the posts should be censored. The companies would benefit from another way of saying “it’s not my fault.” This customer-based censorship system would provide that benefit.

Social-media companies wouldn’t be completely off the hook, though. They would still have to detect and censor illegalities, such as threatening and fomenting violence and other illegal acts. They do that now. Even so, the major items that social-media companies would have to address to implement the system are: deciding what parameters to enable customers to censor and filter by; realigning moderators away from censorship to focus on bullying; and resisting the urge to control the system for their own purposes.

Post Characterization

POSTERS would be required to characterize their post, message, or comment by clicking on appropriate boxes for potentially objectionable attributes, such as mentions of: politics, violence, illegal drugs, religion, abortion, guns, conspiracy theories, profanity, sexual content, unpopular opinions, and so on. The platform would decide what topics their readers might want to censor. Each post would also be characterized for criteria that are not ordinarily censored but that some readers might like to avoid, such as long posts, bandwidth-consuming images/videos and documents, links, advertising and solicitation, mathematics, computer code, polls, and the like. Some of these criteria could be automated instead of having the poster provide manual input.
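As a rough illustration of how some criteria might be automated, the sketch below tags a post as long or as containing links and then merges those tags with the boxes the poster checked. The tag names, the 300-word cutoff, and the function names are all assumptions made for this example; a real platform would choose its own.

```python
import re

# Hypothetical values: the tag names and the length cutoff are
# illustrative assumptions, not part of any real platform.
LONG_POST_WORDS = 300
URL_PATTERN = re.compile(r"https?://\S+", re.IGNORECASE)


def automatic_tags(text: str) -> set[str]:
    """Return the characteristics that can be detected without poster input."""
    tags = set()
    if len(text.split()) > LONG_POST_WORDS:
        tags.add("long_post")
    if URL_PATTERN.search(text):
        tags.add("links")
    return tags


def characterize(text: str, poster_tags: set[str]) -> set[str]:
    """Combine the boxes the poster checked with the automatic tags."""
    return set(poster_tags) | automatic_tags(text)
```

Topics such as politics or religion are much harder to detect reliably, which is why the poster’s own check boxes remain the primary input in this sketch.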

There would, of course, be posters who wouldn’t characterize their posts appropriately, if at all, because they believe they “have the right not to.” They probably wouldn’t even check a “nothing objectionable” box if there were one. The solution would be to let readers report them for “failure to appropriately characterize objectionable post” and impose an automatic ban once enough of their posts have been reported as such. This wouldn’t require much, if any, moderator intervention since the banning would be based on a pattern of infractions rather than a single post.
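The pattern-based ban could be sketched along the following lines. The two thresholds (five independent readers per post, three flagged posts per poster) and the class name are hypothetical; the article does not say how many reports would be “enough.”

```python
from collections import defaultdict

# Hypothetical thresholds chosen only for illustration.
REPORTS_PER_POST = 5        # independent readers needed to flag one post
FLAGGED_POSTS_FOR_BAN = 3   # flagged posts needed to ban the poster


class MischaracterizationTracker:
    """Tracks "failure to appropriately characterize" reports per poster."""

    def __init__(self):
        # poster -> post_id -> set of readers who reported that post
        self._reports = defaultdict(lambda: defaultdict(set))

    def report(self, poster: str, post_id: str, reader: str) -> None:
        # Using a set means repeat reports by one reader count only once.
        self._reports[poster][post_id].add(reader)

    def flagged_posts(self, poster: str) -> int:
        posts = self._reports.get(poster, {})
        return sum(1 for readers in posts.values()
                   if len(readers) >= REPORTS_PER_POST)

    def should_ban(self, poster: str) -> bool:
        # The ban depends on a pattern across posts, not on a single post.
        return self.flagged_posts(poster) >= FLAGGED_POSTS_FOR_BAN
```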

Post Filtering

READERS would be allowed to set their preferences for what post characteristics they want to censor. They could change these preferences at any time, just as they can change their personal interests now. Readers who wanted to see all posts (e.g., law enforcement) could turn off all filters, leaving only the minimal censorship of illegalities.
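Reader-side filtering of this kind amounts to a set comparison between a post’s characteristics and the reader’s blocked list. The following is a minimal sketch; the `Post` fields, the `illegal` flag, and the tag names are assumptions for illustration only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Post:
    author: str
    text: str
    tags: frozenset = frozenset()   # characteristics declared for the post
    illegal: bool = False           # set by the platform, not by readers


def visible_posts(posts, blocked_tags):
    """Return the posts a reader sees given the characteristics they block."""
    blocked = set(blocked_tags)
    return [
        p for p in posts
        # Illegal content is removed for everyone; everything else is
        # hidden only if it carries a characteristic this reader blocks.
        if not p.illegal and not (p.tags & blocked)
    ]
```

A reader with an empty `blocked_tags` set sees everything except the content the platform has marked illegal, which matches the “all filters off” case described above.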

The combination of post characterization and filtering should provide adequate censorship for those who desire it and freedom of speech for those who want to offer or learn of ideas outside the mainstream. The effort would be widely distributed, so neither the social media platform nor its customers would bear an unreasonable burden. All of these types of things (e.g., checking boxes to post on social media) are currently being done, so no great technological leaps would be required.

Bully Deterrence

That brings us to the big issue that is hard to come to grips with: bullying. Anybody can be a bully regardless of age, sex, religion, or political ideology. Being polite doesn’t seem to be taught by some parents as it was generations ago, or maybe peer groups are exerting more of a negative influence now. It is also likely that the anonymity and remoteness of social media bring out the worst in some of us.

READERS would be encouraged to report posts for types of bullying, such as:

- Personal attacks, including name calling, insults, mocking, and threats of harm or retribution

- Attacks based on race, culture, or nationality

- Attacks based on age, disabilities, or medical conditions

- Attacks based on sex or gender issues

- Misinformation

- Failure to appropriately characterize an objectionable post

When a bully is reported for an offensive post, what usually happens is that the complaint is given to a moderator to decide what, if any, action should be taken. Sometimes, nothing happens to the bully. Sometimes, the bully is admonished. Sometimes, the bully is prevented from posting for a period of time. Only rarely is the bully removed from the platform. The reporter almost never learns in a timely manner how their complaint was addressed.

Moderators don’t just exacerbate censorship problems, sometimes they ARE the problem. There have been cases in which volunteer moderators actually conspired to attack a certain individual or group of individuals. The moderator or an accomplice would bait someone in a conversation until the discussion turned ugly. Then the “baiter” would report the target and the moderator would ban him. This happens in professional football all the time. One player takes a cheap shot at an opponent and when the opponent retaliates, he is the one who gets penalized.

This type of insider abuse would be more difficult under a customer-driven-censorship system for two reasons. First, customers would be less likely to encounter such bullying because they would be more likely to filter out provocative characteristics like politics. (Remember, incendiary rhetoric can occur even in general topic groups. In the customer-censorship system, posts are characterized, not groups.) Second, customers would be more likely to report any type of bullying behavior because the reporting would be automated (as it is now on many systems), and more importantly, because they understand that their vote will count.

The customer-censorship system makes customers the jury rather than just having an anonymous moderator as a supreme judge.

Statistics could be kept on posts that have been reported, like the numbers of customers reading and reporting the post, and the characteristics that were reported. Business rules could be devised to judge a post based on the number of reports by independent customers for different responses, thus ensuring consistency and timeliness. Furthermore, both the poster and the reporter could be informed of the status of the post automatically.
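One way such business rules might look is sketched below: the share of independent readers who reported a post is compared against a tiered table of responses. The minimum audience size, the percentages, and the response names are hypothetical placeholders rather than recommended values.

```python
from dataclasses import dataclass, field

# Hypothetical rule table: (minimum report rate, response), strictest first.
MIN_READERS = 20
RULES = [
    (0.20, "remove"),
    (0.10, "hide pending review"),
    (0.05, "warn poster"),
]


@dataclass
class PostStats:
    readers: set = field(default_factory=set)      # who has read the post
    reporters: dict = field(default_factory=dict)  # reader -> reported reason

    def record_view(self, reader: str) -> None:
        self.readers.add(reader)

    def record_report(self, reader: str, reason: str) -> None:
        self.readers.add(reader)         # a reporter has necessarily read it
        self.reporters[reader] = reason  # one report per reader

    def report_rate(self) -> float:
        if not self.readers:
            return 0.0
        return len(self.reporters) / len(self.readers)


def decide(stats: PostStats) -> str:
    """Apply the same rule table to every post for a consistent response."""
    if len(stats.readers) < MIN_READERS:
        return "no action"  # too few readers to judge fairly
    rate = stats.report_rate()
    for threshold, action in RULES:
        if rate >= threshold:
            return action
    return "no action"
```

Because the outcome is computed rather than decided case by case, the same function could also drive the automatic status messages sent to the poster and the reporter.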

“Karen” Deterrence

No system of rules, whether written or automated, exists without people trying to abuse them. In this system, some posters would fail to characterize their posts or would characterize them inappropriately. That would result in reports that would eventually get the post deleted and perhaps even get the poster admonished. But, there’s another way the system might be abused. Some individuals, call them Karens, may report other people’s posts inappropriately.

There are a number of ways this could be done.

- A Karen may not filter posts for a particular characteristic, say politics, but then report posts because they have that characteristic.

- A Karen might inappropriately report a post for several violations, not just the one that applies.

- A Karen might inappropriately report several posts from the same poster for violations that may not even apply.

These would be relatively easy to detect with an automated system that could compile statistics on reports. What would be more challenging to detect would be if a Karen created several accounts or assembled accomplices to make invalid reports. That would probably require further analysis.
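The three single-account patterns listed above could be detected with simple statistics over the report log, as in the sketch below. The `Report` fields, the two limits, and the flag wording are assumptions for illustration, and a flag here is only a signal for closer review, not proof of abuse. Coordinated reports from multiple accounts are not covered.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Report:
    reporter: str
    poster: str
    post_id: str
    reasons: frozenset  # characteristics or violations cited in the report


# Hypothetical limits chosen only for illustration.
MAX_REASONS_PER_REPORT = 3
MAX_POSTS_PER_POSTER = 5


def suspicious_reporters(reports, filters, declared_tags):
    """Flag reporters whose reports match the three abuse patterns.

    filters: reporter -> set of characteristics that reporter filters out
    declared_tags: post_id -> set of characteristics the poster declared
    """
    flags = defaultdict(set)
    targeted = defaultdict(set)  # (reporter, poster) -> reported post ids
    for r in reports:
        # Pattern 1: citing a properly declared characteristic that the
        # reporter chose not to filter out of their own feed.
        declared = declared_tags.get(r.post_id, set())
        if (r.reasons & declared) - filters.get(r.reporter, set()):
            flags[r.reporter].add("reported unfiltered characteristic")
        # Pattern 2: piling many violations onto a single report.
        if len(r.reasons) > MAX_REASONS_PER_REPORT:
            flags[r.reporter].add("too many violations in one report")
        targeted[(r.reporter, r.poster)].add(r.post_id)
    # Pattern 3: reporting many different posts by the same poster.
    for (reporter, _poster), post_ids in targeted.items():
        if len(post_ids) > MAX_POSTS_PER_POSTER:
            flags[reporter].add("repeated reports against one poster")
    return dict(flags)
```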

Final Words

With a customer-driven censorship system, people who wanted to espouse unpopular ideas could do so without being targeted by blanket censorship. Each individual would define what they wanted to censor in their own feeds. Karens wouldn’t be allowed to censor everybody to suit their own sensibilities. People who wanted to try to bridge the ideological gap in the country could do so by manipulating their filters to see more content. More importantly, people who would be offended by the posts wouldn’t see them. It would be like turning off the TV when The View comes on. Because the system would be automated, statistics could be kept on posters and reporters to ensure that the system isn’t abused. Reported posts could be processed more quickly.

I wouldn’t expect that implementing such a system would result in the downsizing of thousands of paid moderators. They’re already overworked and in need of additional staff. Rather, I see an automated censorship system fueled by data from customers as a way to bring social media into the 21st century by making it data-driven. It’s worth a try.